HabitRPG

There’s always a fine line in grad school between using productivity hacks to procrastinate and finding the right tools to add structure to what can be a very nebulous and unstructured experience. I have to watch this because I have a tendency to over-optimize at the best of times. On the other hand, I’m terrible at following a purely self-imposed schedule, so *some* hacks help.

My latest tool is HabitRPG. I haven’t been using it that long, but it has great promise. Way back when I first got the Nintendo Wii, I used Wii Fit regularly. Partly that was because my sciatica was bad at the time, and following my own stretch routine meant I could walk and sit without pain, but that was just the required maintenance. What kept me using it regularly even when it wasn’t immediately necessary was the simple rewards. Getting up to perfect form on my chosen stretches, getting higher scores on the relevant balance games, and seeing the piggy bank go from bronze to silver to gold were all highly motivating. But then the piggy bank stopped changing, I maxed out on the games and stretches that I used regularly, and I got impatient with the clunky user interface and abandoned it.

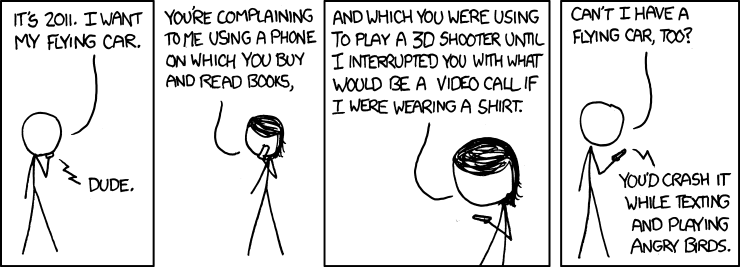

So I know the equivalent of gold stars—simple, barely meaningful indicators of progress—can work quite well for me. And I am a computing science geek, after all, and really like RPGs and leveling and unlocking achievements and charting progress in a visible way. So I’m giving this a shot.

I like the way it divides up the tasks in a functional and relatively simple way. Is it something you want to do systematically and get penalized for *not* doing? It’s a Daily. Is it something you want to do frequently but not on a specific schedule? It’s a Habit. Is it a one-off? ToDo. Done and done.
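For the programmers in the audience, that three-question sort can be sketched as a tiny decision function. This is only an illustration in Python, not anything taken from HabitRPG itself; the names `TaskType` and `classify` and the two yes/no inputs are assumptions made up for the sketch.

```python
from enum import Enum


class TaskType(Enum):
    """The three task kinds described above."""
    DAILY = "Daily"
    HABIT = "Habit"
    TODO = "ToDo"


def classify(recurring: bool, on_schedule: bool) -> TaskType:
    """Hypothetical helper: map the questions from the post onto a task kind.

    recurring   -- do I want to do this more than once?
    on_schedule -- should I be penalized for skipping it on a given day?
    """
    if recurring and on_schedule:
        return TaskType.DAILY   # systematic, penalized when missed
    if recurring:
        return TaskType.HABIT   # frequent, but no fixed schedule
    return TaskType.TODO        # a one-off


if __name__ == "__main__":
    # Example task names are illustrative only.
    print(classify(True, True).value, "- Set day's tasks")
    print(classify(True, False).value, "- Stretch")
    print(classify(False, False).value, "- Renew library card")
```

A one-off never counts as scheduled here, so the second input is simply ignored when the first is false.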

I also like that you can let the system sort out the rewards. If a ToDo has been hanging around for ages, I get more experience for finally checking it off.

This all works well with my 3-must-do tasks rule. I have a Daily for “Set day’s tasks”. Even on relaxing days, I find it helps to have an idea of which things I *really* want to get done. Usually this is housework or creative tasks or social events. But if I don’t get explicit with myself about what I’m going to prioritize, I thrash about which thing I should focus on, and feel guilty at the end of the day for not getting ALL! THE! THINGS! done. And on work days, I definitely have to get specific, or the day is lost to low-priority things and, worst of all, picking up *new* tasks because I don’t have my goals firmly in mind.